How Creative Web Scraping Played a Key Role in a Medicaid Arbitration Case

February 28, 2019

It started with a simple question. An arbitration panel asks a statistics expert to testify about the concept of “acceptable statistical precision” in a routine healthcare audit. Who knew that providing an answer would require thinking like a cyberthief?

The unusual story begins with an arbitration case involving a large commercial payor and an audit of alleged overpayments by New York Medicaid. During cross-examination, the panel tried to pin down an FTI Consulting statistics expert with questions related to the “typical” and “reliable” levels of precision in similar healthcare audits. The panel’s questions implied that there was in fact some threshold for “typical” and “reliable” levels of precision for overpayment extrapolations.

After reviewing several means for determining the answers, one stood out: The New York Office of the Medicaid Inspector General (OMIG) regularly performs statistical sampling to assess alleged overpayments. These sampling reports are online and publicly available. All an analyst would have to do is collect the reports, crunch the numbers and assess a commonly acceptable threshold.

It turned out to be anything but straightforward. There are thousands of OMIG reports. The information they contained had never been collected into a single, structured dataset. Conducting a detailed, comprehensive analysis of the data would be like rifling through a thousand phonebooks to find all the residents of Main Street.

Like a Hacker in the Night

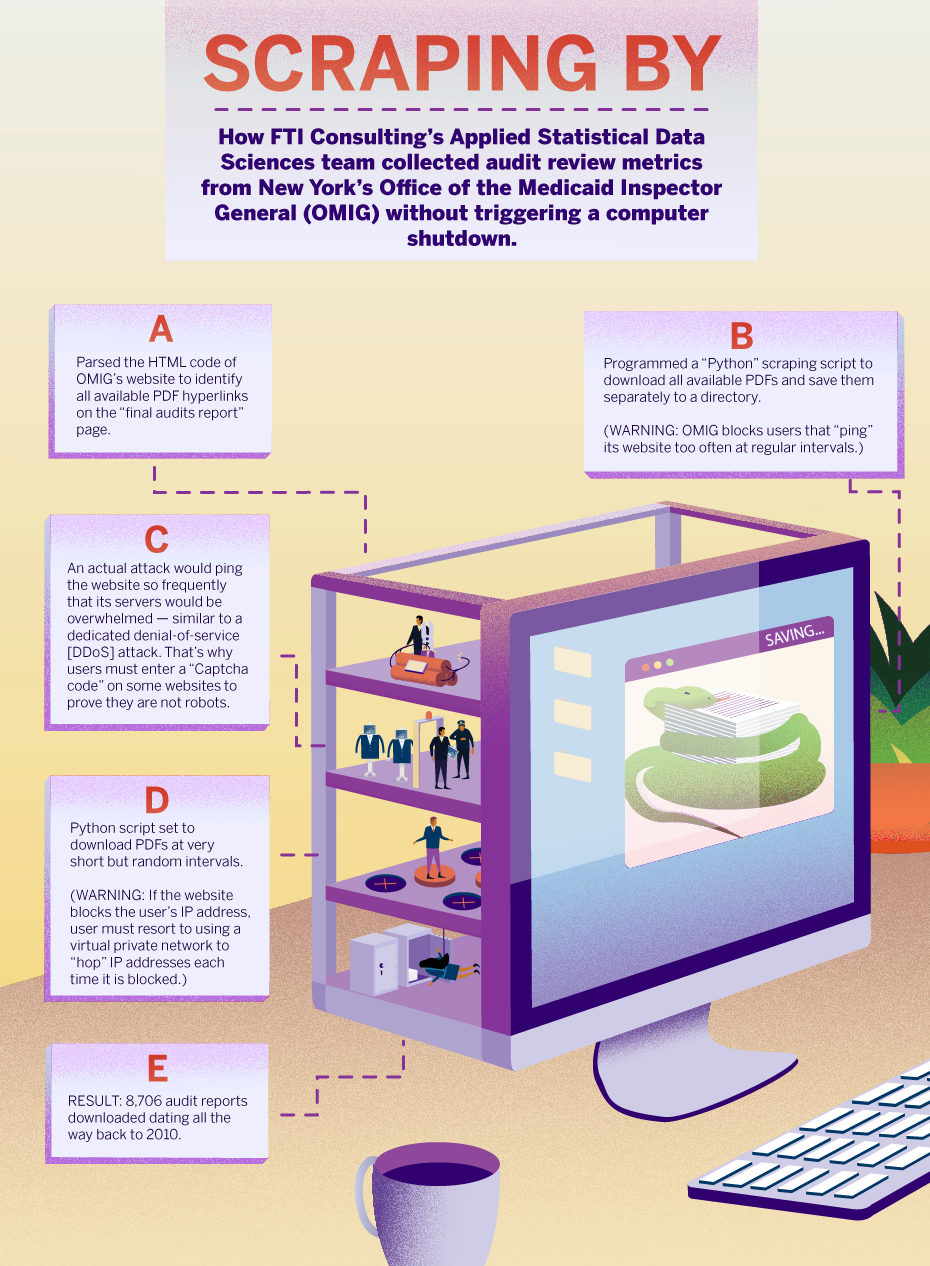

Enter FTI Consulting’s Applied Statistical Data Sciences team. With a goal of compiling a dataset of Medicaid audit review metrics for providers in New York State, the team approached OMIG’s website with strategic discretion. Its mission was to download hundreds of the state’s audit summaries (available as PDFs) without pinging the website so frequently that the site would block the team’s IP address, much as a website locks out traffic during a distributed denial-of-service (DDoS) attack.

Getting blocked would have forced FTI Consulting’s analysts to use a far more laborious and time-consuming process to complete their mission.
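The idea of spacing out requests so a site does not block the requester can be illustrated with a minimal Python sketch. It is not the team's actual script, which the article does not show. The function name `polite_fetch`, the delay values and the injected `fetch` callable are all assumptions made for illustration.

```python
import random
import time
from typing import Callable, Iterable, Iterator, Tuple


def polite_fetch(
    urls: Iterable[str],
    fetch: Callable[[str], bytes],
    min_delay: float = 2.0,   # assumed pause in seconds; not from the article
    jitter: float = 1.0,      # assumed extra random pause, 0..jitter seconds
    sleep: Callable[[float], None] = time.sleep,
) -> Iterator[Tuple[str, bytes]]:
    """Yield (url, payload) pairs, pausing between consecutive requests.

    `fetch` is whatever function actually downloads a URL. It is passed in
    so the throttling logic can be tried without touching a real website.
    """
    for index, url in enumerate(urls):
        if index > 0:
            # Wait before every request except the first, with random
            # jitter so the requests do not arrive at a fixed rhythm.
            sleep(min_delay + random.uniform(0, jitter))
        yield url, fetch(url)
```

In practice `fetch` might wrap an HTTP client call that returns the PDF bytes. Keeping the pause separate from the download makes it easy to lengthen the delay if the site starts refusing requests.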

The team began by employing a computer program (or “script”) similar to OMIG’s to operate within the website environment. Then they hit the return key.

Like a hacker in the night, the program identified the summary PDFs and downloaded 8,706 audit reports dating back to 2010. With the reports in hand, FTI Consulting’s analysts parsed the data in each PDF and searched using key terms to identify whether the statistics of interest were available within each report. The team then scraped the relevant information, assembled it into a database and reviewed it for accuracy.
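The key-term search described above can be sketched in a few lines of Python. The sketch assumes the text has already been extracted from each PDF. The term list and the function names `find_key_terms` and `build_rows` are hypothetical, since the article does not say which terms or tools the team used.

```python
import re
from typing import Dict, List, Sequence

# Hypothetical key terms; the article does not list the ones actually used.
KEY_TERMS = ("confidence interval", "point estimate", "sample size", "precision")


def find_key_terms(report_text: str, terms: Sequence[str] = KEY_TERMS) -> Dict[str, bool]:
    """Report which key terms appear in one report's extracted text."""
    # Collapse line breaks and repeated spaces, since text extracted from
    # PDFs often splits a phrase across lines, then compare in lowercase.
    normalized = re.sub(r"\s+", " ", report_text).lower()
    return {term: term in normalized for term in terms}


def build_rows(reports: Dict[str, str]) -> List[Dict[str, object]]:
    """Build one record per report, ready to load into a table for review."""
    rows: List[Dict[str, object]] = []
    for report_id, text in reports.items():
        flags = find_key_terms(text)
        rows.append({
            "report_id": report_id,
            **flags,
            "has_all_terms": all(flags.values()),
        })
    return rows
```

Records flagged this way would still need a manual accuracy check, as the team's own review step suggests, because a term can appear in a report without the corresponding statistic being stated.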

Though the operation had the earmarks of a cyberattack, it was completely within the law since it only scraped public information.

A Feat Unto Itself

What did the analysts discover? One might say a mess: Turns out the reports showed no indication of any thresholds or requirements for a precision level deemed appropriate or reasonable for purposes of extrapolating a sample result to a population.

In the end, FTI Consulting’s ability to create a first-of-its-kind dataset for examining OMIG’s precision levels, in hopes of finding a historically “acceptable” level of precision, was a feat unto itself.

When the arbitration panel’s questions were put to FTI Consulting’s statistics expert, he replied that the question of “acceptable statistical precision” could not be answered by the study of statistics, and that it could not in fact be answered through New York Medicaid audits.

The infographic below provides more detail about the approach that FTI Consulting’s Applied Statistical Data Sciences team took in collecting data and compiling its dataset. For a look at the key datasets from FTI Consulting’s NY OMIG audit, see the whitepaper Healthcare Audit Metrics.

The views expressed herein are those of the author(s) and not necessarily the views of FTI Consulting, Inc., its management, its subsidiaries, its affiliates, or its other professionals. Copyright © 2019. All rights reserved.
